- 🎯 TL;DR - What Is QA Automation?

- What Is QA Automation (Really)?

- QA Automation vs Manual Testing — When Does Each Make Sense?

- Where QA Automation Fits in the Test Pyramid

- How QA Automation Actually Works (Step-by-Step Playbook)

- Step 1: Define Risk and Goals (Not Just Test Cases)

- Step 2: Decide What Not to Automate

- Step 3: Choose the Right Layer

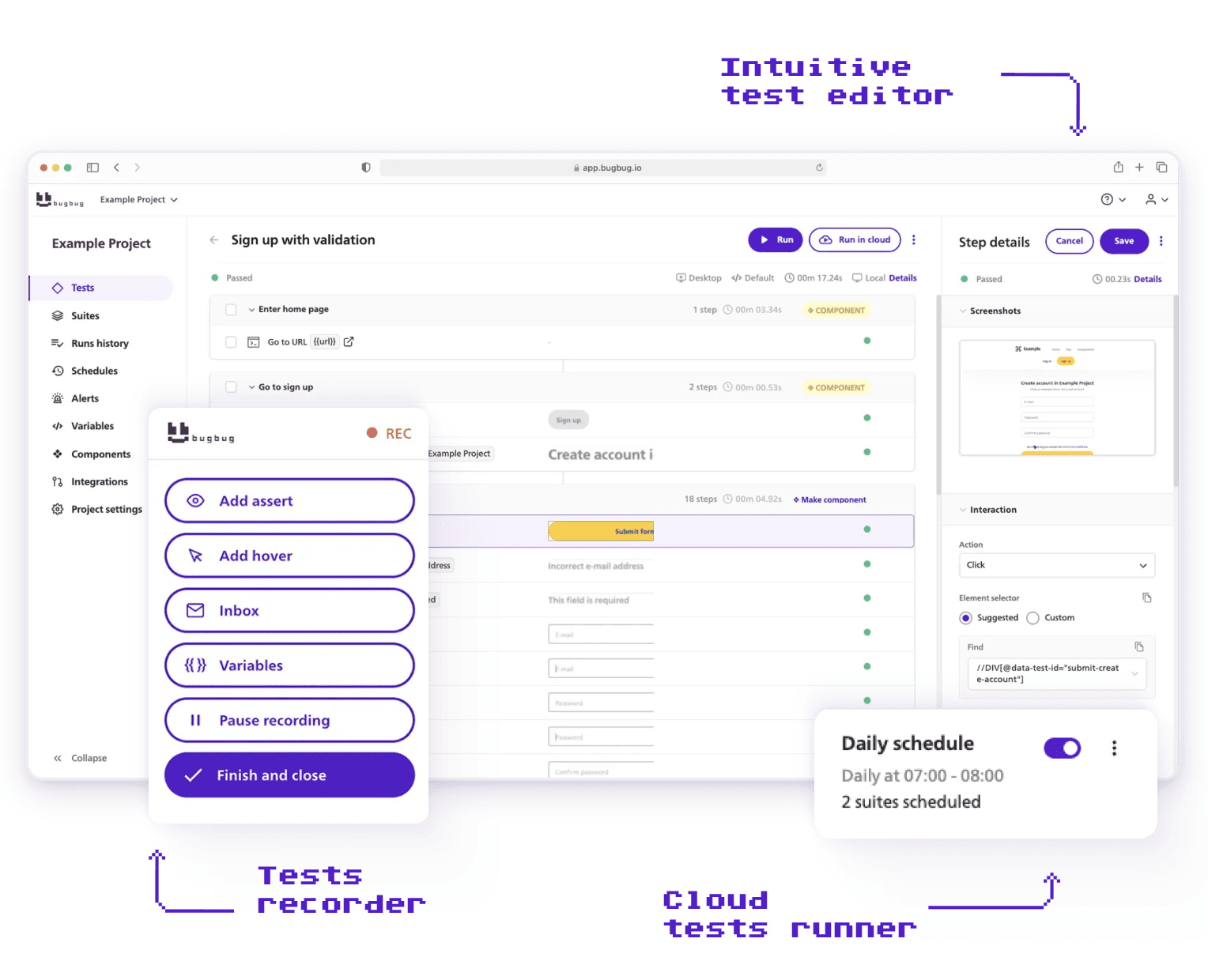

- Step 4: Choose Tooling Based on Team Capacity

- Step 5: Integrate into CI/CD Early

- Step 6: Measure What Matters

- Step 7: Treat Test Maintenance as Engineering Work

- Step 8: Continuously Improve Scope

- The Real Benefits of QA Automation (Beyond the Marketing Claims)

- The Limitations of QA Automation (No One Talks About This)

- Popular QA Automation Tools (And When to Use Each)

- Popular QA Automation Tools (And How to Choose)

- What Does a QA Automation Engineer Actually Do Day-to-Day?

- Common Mistakes That Make QA Automation Feel “Boring”

- QA Automation Best Practices for Modern SaaS Teams

- A Simple Maturity Model for QA Automation

- Final Reflection

🎯 TL;DR - What Is QA Automation?

🔑 QA Automation — Key Takeaways

What Is QA Automation?

QA automation is a risk-control system that uses automated tests to:

- Protect release velocity

- Increase test coverage

- Reduce human error

- Enable fast CI/CD feedback

It’s not just writing scripts — it’s building sustainable quality infrastructure.

When Does Automation Make Sense?

Use manual testing for:

- New or unstable features

- UX validation

- Rapid iteration

Use automation for:

- Regression suites

- Frequent releases

- Cross-browser/device validation

- High-risk, repetitive workflows

Follow the Test Pyramid

- Unit tests: Fast, cheap, isolated

- API tests: Stable, high ROI

- UI tests: Valuable but fragile; keep minimal

Too much UI automation = slow, flaky, expensive suites.

What Makes Automation Work

- Risk-driven coverage (not test volume)

- CI/CD integration with fast feedback

- Low flakiness

- Measured by escaped defects, not script count

- Treated as engineering, not maintenance

Common Failures

- Automating everything at the UI layer

- Ignoring test suite performance

- Allowing flaky tests

- Measuring success by number of tests

Bad automation increases risk instead of reducing it.


What Is QA Automation (Really)?

Most guides will tell you:

“QA automation is the process of using tools and scripts to automate software testing.”

Technically correct. Practically useless.

It describes the mechanics, not the purpose. In practice, QA automation covers many parts of the testing process, and it only works when it is balanced against manual testing across the whole software development lifecycle.

Here’s the clearer version:

QA automation is the practice of building repeatable systems that reduce product risk and protect release velocity. It uses tools and scripts to evaluate functionality, performance, and security. Because automated tests can cover more features and scenarios than manual testing alone, and run them the same way on every change, they improve accuracy and shorten release cycles, which is why automation is vital for Agile and DevOps workflows.

The Misunderstanding That Causes Frustration

If you’ve read developer forums or Reddit threads about automation, you’ll notice a recurring complaint:

“I feel like I’m just writing and fixing UI steps all day.”

That happens when automation is reduced to:

- Writing and maintaining automation scripts (such as Playwright or Selenium scripts)

- Fixing broken selectors after UI changes

- Debugging CI failures

- Updating test steps for every new feature

In that version of the job, automation becomes maintenance work.

But true QA automation is broader and more strategic. Effective QA automation relies on well-designed automation workflows, not just individual scripts, to streamline and manage the entire testing process.

Automation Is About Risk, Not Repetition

Yes, automation handles repetitive tasks. That’s the surface benefit. It also significantly reduces the risk of human error in repetitive or regression testing, where manual testers are more likely to make mistakes due to monotony or oversight.

The deeper value is risk control.

Every release introduces uncertainty:

- Will checkout still work?

- Did this refactor break authentication?

- Does the app still render correctly in Safari?

- Did we introduce performance regressions?

Automation gives you fast, consistent feedback on those risks.

But only if it’s built intentionally.

Bad automation increases risk:

- Flaky tests destroy trust.

- Slow pipelines block merges.

- Poor test design gives false confidence.

That’s why QA automation is not just tooling. It’s a strategy decision.

QA Automation vs Manual Testing — When Does Each Make Sense?

A common mistake in modern teams is framing automation as “better” than manual testing.

That’s the wrong comparison.

Manual and automated testing solve different problems.

The strongest teams design a hybrid strategy, where the testing team collaborates to determine the right balance between manual and automated testing for their specific needs.

The real question is: When does automation create leverage — and when does it create overhead?

Start with thorough test planning. A test strategy gives structure to your tests and test code, which keeps the suite readable and maintainable as it grows.

When Manual Testing Is the Right Choice

Manual testing is better when:

- You’re exploring a brand-new feature.

- Requirements are still changing.

- UX and usability are under evaluation.

- The feature is low-risk and rarely touched.

- You’re validating subjective behavior (visual polish, micro-interactions).

Exploratory testing, in particular, is a human skill. No framework can replicate a curious tester intentionally trying to break assumptions.

Early-stage startups often over-automate too soon.

If you’re iterating daily and changing flows constantly, heavy automation will slow you down.

When QA Automation Is Worth the Investment

Automation becomes valuable when:

- You have regression suites that must run on every release.

- You deploy frequently (CI/CD environments).

- You support multiple browsers or devices.

- You manage large datasets or repetitive validations.

- You’ve experienced production regressions that could have been caught automatically.

For example:

If your SaaS product ships 3–5 times per week, manual regression simply doesn’t scale. Automation protects velocity. Automated testing is particularly effective for regression and smoke testing, which are run often and require swift feedback after updates.

But if your product changes completely every sprint, automation may create maintenance debt instead of value.
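To make "worth the investment" concrete, here is a minimal break-even sketch in Python. It assumes a deliberately simple cost model of our own, not a standard formula: automation has a one-time build cost plus a maintenance cost per run, while manual regression costs a fixed number of hours per run. The function name and all numbers are hypothetical.

```python
import math
from typing import Optional


def break_even_runs(build_hours: float,
                    maintenance_hours_per_run: float,
                    manual_hours_per_run: float) -> Optional[int]:
    """Return the number of regression runs after which automation is cheaper.

    Simplified cost model (an assumption for illustration):
      automation cost after n runs = build_hours + n * maintenance_hours_per_run
      manual cost after n runs     = n * manual_hours_per_run
    Returns None when maintenance per run costs as much as a manual pass,
    because automation then never pays off under this model.
    """
    saving_per_run = manual_hours_per_run - maintenance_hours_per_run
    if saving_per_run <= 0:
        return None
    return math.ceil(build_hours / saving_per_run)


# Hypothetical stable product: 80 h to build, 0.5 h upkeep vs a 6 h manual pass.
print(break_even_runs(80, 0.5, 6))  # 15 (roughly 3 to 5 weeks at 3-5 releases per week)
# Hypothetical product that changes every sprint: upkeep exceeds a manual pass.
print(break_even_runs(40, 3, 2))    # None
```

The second call mirrors the warning above: when the product changes faster than the tests can follow, per-run upkeep swallows the savings and the break-even point never arrives.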


Where QA Automation Fits in the Test Pyramid

One of the biggest reasons automation becomes boring, fragile, or high-maintenance is simple:

Too much of it lives at the UI layer.

To understand why that’s a problem, we need to revisit the test pyramid. Understanding different testing types—such as regression, functional, API, and performance testing—is crucial for effective QA automation, as each type plays a specific role in the overall strategy.

Comprehensive testing involves combining these different testing types across the pyramid to ensure thorough coverage and higher software quality. Test automation frameworks help structure tests across these different layers, making it easier to organize and maintain a balanced automation strategy. Many automation frameworks support multiple programming languages, allowing teams to choose tools compatible with their preferred coding languages.

The Test Pyramid (And Why It Still Matters)

The test pyramid is not a theoretical model. It’s a cost model.

At the bottom:

- Unit tests: fast, cheap, isolated

In the middle:

- API / service tests: validate logic and integrations

At the top:

- UI / end-to-end (E2E) tests: expensive, slow, fragile


The higher you go, the more:

- Execution time increases

- Flakiness risk rises

- Maintenance effort grows

- Debugging complexity expands

If your automation strategy is 80% UI tests, you’re operating at the most expensive layer.

That’s why so many automation engineers feel like “step writers.” They’re working where changes are most frequent and locators break most often.

Unit Tests — Developer-Owned Safety Nets

Unit tests validate small pieces of logic in isolation. They are the foundation of automated testing, catching logic errors close to the code that introduced them.

They are:

- Fast (milliseconds)

- Cheap to maintain

- Easy to debug

QA automation engineers typically don’t write these, but they should understand their coverage. If unit coverage is weak, more pressure falls on higher layers — especially UI.

A mature QA strategy collaborates with developers to ensure strong lower-level coverage.
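For reference, this is roughly what a developer-owned unit test looks like. It is a minimal sketch using Python's built-in `unittest`; `apply_discount` and its rounding rule are hypothetical stand-ins for any small piece of business logic.

```python
import unittest


def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical pricing rule: apply a percentage discount, rounding down.

    Raises ValueError for percentages outside 0-100.
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price_cents * (100 - percent) // 100


class ApplyDiscountTest(unittest.TestCase):
    def test_regular_discount(self):
        self.assertEqual(apply_discount(10_000, 20), 8_000)

    def test_rounds_down_to_whole_cents(self):
        # 999 * 85 // 100 = 849 (849.15 truncated)
        self.assertEqual(apply_discount(999, 15), 849)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(1_000, 120)
```

Run it with `python -m unittest <module>`. Tests like these finish in milliseconds and need no browser, network, or shared test data, which is why they sit at the base of the pyramid.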

API / Service-Level Tests — The Sweet Spot

API tests validate business logic without depending on UI stability. They are also where most integration testing happens, because application programming interfaces (APIs) are the boundaries through which software modules communicate.

They:

- Run faster than UI tests

- Avoid DOM fragility

- Catch logic issues early

- Scale well in CI

For many SaaS products, API testing provides the highest return on automation effort.

If you’re stuck writing dozens of UI flows that simply validate API behavior, consider pushing that coverage downward.

This is where automation becomes engineering rather than maintenance.
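Here is a minimal sketch of an API-level check, using only the Python standard library so it runs without extra installs. The in-process server, the `/api/cart/total` endpoint, and its JSON shape are hypothetical; against a real product you would point the test at a locally started or staging instance of your service.

```python
import json
import threading
import unittest
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
from urllib.request import ProxyHandler, build_opener


class CartHandler(BaseHTTPRequestHandler):
    """Hypothetical cart service that returns a fixed total as JSON."""

    def do_GET(self):
        if self.path == "/api/cart/total":
            status, payload = 200, {"currency": "USD", "total_cents": 4500}
        else:
            status, payload = 404, {"error": "not found"}
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence per-request logging during tests


class CartApiTest(unittest.TestCase):
    @classmethod
    def setUpClass(cls):
        # Port 0 asks the OS for any free port; serve in a background thread.
        cls.server = ThreadingHTTPServer(("127.0.0.1", 0), CartHandler)
        cls.base_url = f"http://127.0.0.1:{cls.server.server_address[1]}"
        threading.Thread(target=cls.server.serve_forever, daemon=True).start()
        # An opener with no proxies, so requests always reach the local server.
        cls.http = build_opener(ProxyHandler({}))

    @classmethod
    def tearDownClass(cls):
        cls.server.shutdown()
        cls.server.server_close()

    def test_cart_total_contract(self):
        # Check status, headers, and payload directly: no browser, no DOM, no selectors.
        with self.http.open(f"{self.base_url}/api/cart/total") as resp:
            self.assertEqual(resp.status, 200)
            self.assertEqual(resp.headers["Content-Type"], "application/json")
            data = json.load(resp)
        self.assertEqual(data, {"currency": "USD", "total_cents": 4500})
```

The same assertion made through the UI would need a logged-in browser session, a rendered cart page, and a selector for the total, each of which can break independently of the business rule being checked.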

UI / End-to-End Tests — High Value, High Cost

UI tests should verify:

- Critical user journeys (checkout, login, onboarding)

- Cross-browser compatibility

- Full-stack integrations

They should not validate every validation message or internal rule.

UI automation is essential — but strategic.

If you automate everything at this layer, your suite becomes:

- Slow

- Fragile

- Difficult to scale

- Emotionally draining to maintain

Load and stress testing, where automated tests simulate thousands of concurrent virtual users, is a separate job; it belongs with the non-functional checks covered below, not in your UI suite.

The goal isn’t eliminating UI tests.

It’s minimizing them.

Non-Functional Automation — The Overlooked Layer

Automation is not limited to functional flows.

Modern QA automation also includes:

- Performance testing

- Load and stress testing

- Accessibility validation

- Monitoring and SLA verification

- Log scanning

- Static analysis

- Test data generation tools

- Security testing

This is where many senior automation engineers expand their impact.

If your role feels repetitive, expanding into these areas often reintroduces challenge and creativity.
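Performance and SLA checks can start small. The sketch below assumes you already collect response-time samples (from a load-testing tool, logs, or monitoring) and compares the 95th percentile, computed with the nearest-rank method, against a latency budget. The 300 ms budget, the function names, and the sample data are hypothetical.

```python
import math
from typing import Sequence, Tuple


def percentile(samples_ms: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples <= it."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def check_latency_budget(samples_ms: Sequence[float], budget_ms: float = 300.0,
                         pct: float = 95.0) -> Tuple[bool, float]:
    """Return (passed, observed) so a CI job can fail when the budget is breached."""
    observed = percentile(samples_ms, pct)
    return observed <= budget_ms, observed


# Hypothetical response-time samples in milliseconds.
steady = [100] * 19 + [900]  # one slow outlier in 20 requests
degraded = [120, 135, 140, 150, 160, 170, 180, 190, 210, 850]
print(check_latency_budget(steady))    # (True, 100)
print(check_latency_budget(degraded))  # (False, 850)
```

Checking a percentile rather than the average or the maximum is a common choice: a single outlier in a large sample does not fail the build, but a sustained slowdown does. Note that with only 10 samples the p95 is simply the slowest request, so collect enough samples for the percentile to mean something.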

How QA Automation Actually Works (Step-by-Step Playbook)

Most guides list generic steps like “write scripts and run them.”

That’s not how successful automation programs operate. They start from a well-defined testing approach that sets out the methodology, tools, and practices before any scripts are written.

For cross-browser and cross-device coverage, a cloud-based testing platform can help: it gives teams access to many real devices and browsers without maintaining them in-house, which keeps device fragmentation manageable.

Here’s a practical implementation framework used by effective SaaS teams.

Step 1: Define Risk and Goals (Not Just Test Cases)

Before writing a single script, answer:

- What failures hurt us most?

- What caused production incidents in the past?

- Which flows generate revenue?

- Where do regressions typically happen?

Thoughtful test creation at this stage ensures your QA automation efforts are focused on business risk, aligning automated coverage with your most critical workflows.
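One lightweight way to turn those answers into an automation backlog is an impact × likelihood score. This is a common risk-prioritization heuristic, not a standard from any tool; the flows, the 1 to 5 scales, and the bonus for past incidents are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    name: str
    impact: int          # 1 (cosmetic) to 5 (revenue, security, or data loss)
    likelihood: int      # 1 (stable code) to 5 (changes every sprint)
    past_incidents: int  # production incidents traced to this flow


def risk_score(flow: Flow) -> int:
    # Impact x likelihood, plus extra weight per past incident (an assumption:
    # flows that already failed in production deserve coverage first).
    return flow.impact * flow.likelihood + 2 * flow.past_incidents


def prioritize(flows: list[Flow]) -> list[str]:
    """Flow names from highest to lowest risk: the order to automate in."""
    return [f.name for f in sorted(flows, key=risk_score, reverse=True)]


# Hypothetical flows for a SaaS product.
backlog = prioritize([
    Flow("checkout", impact=5, likelihood=4, past_incidents=2),              # 24
    Flow("login", impact=5, likelihood=2, past_incidents=1),                 # 12
    Flow("avatar upload", impact=1, likelihood=3, past_incidents=0),         # 3
    Flow("subscription renewal", impact=5, likelihood=3, past_incidents=0),  # 15
])
print(backlog)  # ['checkout', 'subscription renewal', 'login', 'avatar upload']
```

The exact weights matter less than writing them down: a shared score makes "why is this automated and that not?" a discussion about risk instead of taste.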

Step 2: Decide What Not to Automate

This step is rarely discussed.

Avoid automating:

- Frequently changing UI experiments

- One-time edge-case tests

- Exploratory workflows

- Highly visual subjective checks

Over-automation creates maintenance debt.

Strong QA automation strategies are selective.

Step 3: Choose the Right Layer

Ask for each candidate test:

- Can this be validated at unit level?

- Can this be validated at API level?

- Does this require full UI coverage?

Push coverage down whenever possible.

This reduces fragility and execution time. Automation frameworks help structure tests efficiently across these layers, making it easier to maintain and scale your QA automation strategy.

Step 4: Choose Tooling Based on Team Capacity

Tooling decisions determine long-term maintenance burden.

Choosing the right software development tools is crucial for supporting efficient automation and seamless integration with existing development workflows.

Heavy frameworks require:

- Infrastructure management

- Dependency updates

- Custom reporting integration

- Parallelization configuration

- CI optimization

Step 5: Integrate into CI/CD Early

Automation that runs manually is optional.

Automation in CI is mandatory. Continuous integration and continuous delivery rely on automated testing to ensure rapid and reliable code deployment.

Key practices:

- Run critical tests on every pull request.

- Run extended suites nightly.

- Fail builds on critical regressions.

- Keep feedback under 10 minutes whenever possible.

- Integrate automated tests for continuous testing within the CI/CD pipeline to quickly identify and address issues during development.

Speed drives adoption.

If your pipeline takes 40 minutes, developers will bypass it emotionally — even if they can’t technically.
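The "critical tests on every pull request, extended suites nightly" split can be implemented by tagging tests and selecting them per trigger. The sketch below does this with the standard library's `unittest`; the `critical` decorator, the trigger names, and the example tests are hypothetical. Runners such as pytest offer the same idea through custom markers selected with `-m`.

```python
import unittest


def critical(test_func):
    """Hypothetical marker: part of the fast suite that runs on every pull request."""
    test_func.critical = True
    return test_func


def build_suite(case_cls: type, trigger: str) -> unittest.TestSuite:
    """Select tests for a CI trigger.

    "pull_request" -> only tests marked @critical (fast feedback)
    "nightly"      -> every test in the class (extended regression)
    """
    names = unittest.TestLoader().getTestCaseNames(case_cls)
    if trigger == "pull_request":
        names = [n for n in names if getattr(getattr(case_cls, n), "critical", False)]
    elif trigger != "nightly":
        raise ValueError(f"unknown trigger: {trigger!r}")
    return unittest.TestSuite(case_cls(name) for name in names)


class CheckoutTests(unittest.TestCase):
    # Hypothetical tests; the bodies are placeholders for real assertions.
    @critical
    def test_card_payment_succeeds(self):
        self.assertTrue(True)

    @critical
    def test_checkout_requires_login(self):
        self.assertTrue(True)

    def test_gift_wrap_option_renders(self):
        self.assertTrue(True)


def run_ci(trigger: str) -> bool:
    """Run the selected suite; a CI step would exit non-zero when this is False."""
    result = unittest.TextTestRunner(verbosity=1).run(build_suite(CheckoutTests, trigger))
    return result.wasSuccessful()
```

In a pipeline, the pull-request job would call `run_ci("pull_request")` and fail the build on `False`, while a scheduled job would call `run_ci("nightly")`. Keeping the critical set small is what keeps pull-request feedback fast.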

Step 6: Measure What Matters

Avoid vanity metrics like:

- Total number of test cases

Track instead:

- Execution time

- Flakiness rate

- Escaped defect rate

- Mean time to detect regressions

- Coverage of critical flows

Analyzing test results is essential for identifying issues, measuring trends, and making informed decisions to continuously improve your QA automation processes.

Automation should reduce escaped bugs — not just increase script count.
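Two of these metrics can be computed from data most teams already have. The definitions below are one reasonable convention, not an industry standard: a test counts as flaky if it both passed and failed on the same commit, and the escaped defect rate is the share of defects first found in production. The record format and the history are hypothetical.

```python
from collections import defaultdict

# Each record is (test_name, commit_sha, passed).
Run = tuple[str, str, bool]


def flaky_tests(runs: list[Run]) -> set[str]:
    """Tests that both passed and failed on the same commit.

    Same code with different outcomes points at the test or its environment,
    not at the product.
    """
    outcomes = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(passed)
    return {test for (test, _commit), seen in outcomes.items() if len(seen) == 2}


def flakiness_rate(runs: list[Run]) -> float:
    """Share of distinct tests that showed flaky behavior at least once."""
    tests = {test for test, _, _ in runs}
    return len(flaky_tests(runs)) / len(tests) if tests else 0.0


def escaped_defect_rate(found_in_production: int, found_before_release: int) -> float:
    """Share of all defects that were first found in production."""
    total = found_in_production + found_before_release
    return found_in_production / total if total else 0.0


# Hypothetical CI history.
history = [
    ("test_checkout", "a1f3", True),
    ("test_checkout", "a1f3", False),  # same commit, different result: flaky
    ("test_login", "a1f3", True),
    ("test_login", "b7c2", False),     # new commit broke it: a real regression
    ("test_search", "b7c2", True),
]
print(flaky_tests(history))               # {'test_checkout'}
print(round(flakiness_rate(history), 2))  # 0.33
print(escaped_defect_rate(3, 27))         # 0.1
```

Separating "flaky" from "failed after a change" matters: the first is a test-reliability defect, the second is the suite doing its job.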

Step 7: Treat Test Maintenance as Engineering Work

Flaky tests are not “QA problems.”

They are reliability defects.

If your automation suite:

- Breaks randomly

- Requires constant babysitting

- Is trusted by no one

It’s failing its purpose.

Regularly:

- Refactor tests

- Remove low-value scripts

- Simplify architecture

- Improve stability

Script maintenance is a continuous challenge in QA automation, as frequent application updates can break existing tests and require ongoing attention.

Test debt is real debt.

Step 8: Continuously Improve Scope

Mature automation doesn’t plateau.

Once regression coverage stabilizes:

- Expand into API testing.

- Add performance validation.

- Automate test data provisioning.

- Improve reporting visibility.

- Optimize CI runtime.

Expanding test coverage at this stage not only increases overall accuracy but also shortens release cycles by ensuring more comprehensive validation across different tests and platforms.

Automation should evolve alongside product complexity.


The Real Benefits of QA Automation (Beyond the Marketing Claims)

Most articles say automation is “faster and cheaper.”

That’s technically true — but the real value shows up in how it changes delivery speed, risk management, and engineering culture.

Here’s what actually improves when QA automation is implemented correctly.

Faster Feedback Cycles

Automation accelerates time-to-market by shrinking feedback loops.

In many teams, regression cycles that once took days can be reduced to hours once automated suites are integrated into CI. That speed matters in Agile and DevOps environments, where frequent releases and continuous delivery are standard practice.

Instead of waiting for a full manual pass:

- Pull requests get validated automatically.

- Developers see failures within minutes.

- Fixes happen while context is still fresh.

Automation doesn’t just make testing faster — it makes iteration safer.

Higher Regression Confidence

Automation improves accuracy and consistency. Machines don’t forget steps or skip edge cases after a long sprint.

With strong automated regression:

- Critical flows are validated every release.

- Coverage increases across browsers, devices, and environments.

- Risk is measured instead of guessed.

Research shows that 33% of companies aim to automate between 50% and 75% of their testing efforts — not because automation replaces humans, but because repeatability increases release confidence.

Lower Long-Term Cost of Change

Automation shortens release cycles and reduces the cost of refactoring.

When regression protection is reliable:

- Teams can ship more frequently.

- Large changes feel less risky.

- Technical debt can be addressed safely.

However, this benefit only materializes when automation is stable and well-designed.

Automation does not automatically reduce cost.

Poorly architected automation increases maintenance burden and can slow teams down.

Sustainable automation lowers cost of change. Bad automation raises it.

Cross-Platform Validation at Scale

Modern applications must work across:

- Multiple browsers

- Devices

- Operating systems

Manual validation does not scale here.

Automation increases overall test coverage and enables parallel validation across environments — something that would require large manual teams otherwise.

This scalability is one reason QA remains a growth field, with QA-related roles projected to grow by 15% between 2024 and 2034. As release frequency increases, automation becomes infrastructure — not a luxury.

Developer Accountability (Shift-Left)

Automation embedded in CI/CD shifts quality left.

When tests run automatically on every commit:

- Developers see the impact of changes immediately.

- Quality becomes a shared responsibility.

- QA transitions from gatekeeper to enabler.

In modern Agile and DevOps teams, automation is not optional — it’s foundational to continuous delivery.

The Limitations of QA Automation (No One Talks About This)

Most guides sell automation as a universal solution.

It’s not.

Understanding the limitations of QA automation testing is crucial for building effective automation strategies.

Poorly designed automation programs create frustration, boredom, and technical debt — exactly the sentiment seen in countless developer forums.

Let’s talk about the real constraints.

High Initial Setup Cost

Automation requires:

- Framework selection

- Architecture design

- CI/CD integration

- Environment configuration

- Reporting setup

For small teams, this setup phase can consume weeks or months. It's important to ensure that automation setup is closely aligned with the overall software development process to maximize efficiency and long-term value.

If the product is early-stage and unstable, that investment may not pay off immediately.

Automation works best when:

- Core workflows are stable.

- Release frequency justifies regression protection.

- The team has capacity for initial setup.

Maintenance Burden

Automation is not “write once, run forever.”

Every UI change, API update, or refactor can break tests.

Automated tests need to run regularly and be reviewed and updated as the product changes; otherwise they stop catching issues early and drift out of sync with real behavior.

Maintenance includes:

- Updating selectors

- Refactoring test logic

- Adjusting data fixtures

- Managing dependencies

- Keeping CI stable

If maintenance effort exceeds manual testing effort, automation becomes a liability.

This is often why automation feels boring — the work becomes reactive maintenance instead of strategic improvement.

Flaky Tests Destroy Trust

Flaky tests are the fastest way to kill automation credibility.

When tests:

- Fail intermittently

- Pass on rerun

- Break due to timing issues

Teams stop trusting results. Automation is only useful when its results are accurate: a failure should mean a real problem, and a pass should reflect how the product behaves for real users.

And when developers don’t trust automation, they stop respecting it.

Flakiness must be treated like a production defect — not a minor inconvenience.
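A frequent source of "breaks due to timing issues" is a fixed sleep that is sometimes too short. The usual fix is to poll for the condition you actually care about, with a timeout. Browser frameworks ship their own version of this (Playwright's auto-waiting before actions, for example); the generic helper below is a minimal sketch of the idea, and `wait_until` and `FakeExportJob` are hypothetical names, not library APIs.

```python
import time
from typing import Callable


def wait_until(condition: Callable[[], bool], timeout_s: float = 5.0,
               interval_s: float = 0.05) -> None:
    """Poll `condition` until it returns True, or raise TimeoutError.

    Unlike a fixed time.sleep(2), this returns as soon as the state is ready
    and fails with an explicit message when it never becomes ready.
    """
    deadline = time.monotonic() + timeout_s
    while not condition():
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout_s}s")
        time.sleep(interval_s)


class FakeExportJob:
    """Hypothetical async job that reports completion on its third status check."""

    def __init__(self) -> None:
        self.checks = 0

    def is_done(self) -> bool:
        self.checks += 1
        return self.checks >= 3


job = FakeExportJob()
wait_until(job.is_done, timeout_s=1.0, interval_s=0.01)
print(job.checks)  # 3
```

The test now waits exactly as long as the system needs, up to a clear limit, instead of guessing a delay that is too long on fast runs and too short on slow ones.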

UI Tests Are Fragile by Nature

UI tests depend on:

- DOM structure

- CSS selectors

- Rendering timing

- Frontend refactors

They sit at the most volatile layer of the stack. Dynamic user interfaces can lead to test failures in automation due to changes in element behavior or loading times.

That’s why over-reliance on UI automation leads to high churn and frustration.

A balanced pyramid reduces fragility by pushing validation to lower layers where possible.

False Sense of Security

The most dangerous limitation is psychological.

A large test suite does not equal strong coverage.

If automation:

- Misses critical business logic,

- Only validates surface behavior,

- Doesn’t reflect real user risk,

It creates illusion, not protection. Focusing on meaningful test coverage—ensuring your QA automation validates all critical paths and scenarios—is far more important than simply increasing the number of tests.

That’s why automation must be risk-driven — not volume-driven.

Why Automation Feels Boring Sometimes

When automation is reduced to:

- Updating broken selectors

- Fixing flaky tests

- Writing repetitive UI steps

- Running repetitive tests

It becomes maintenance work. That defeats the purpose of automating repetitive tests in the first place, which is to reduce human error, save time, and free testers to focus on new or more complex work that adds greater value.

Automation becomes interesting again when it evolves into:

- Architecture improvement

- Coverage strategy

- Performance optimization

- Tooling innovation

The difference is ownership.

Popular QA Automation Tools (And When to Use Each)

Tool debates dominate QA discussions.

But here’s the reality:

There is a wide variety of testing tools available for QA automation, and each affects test maintenance and overall software quality differently.

Tool choice shapes your maintenance burden more than it shapes test quality.

Every modern framework can validate user flows.

The real differentiator is how much infrastructure and upkeep your team can realistically own.

Popular QA Automation Tools (And How to Choose)

Teams looking into QA automation usually ask two things:

- What tools are available?

- How do we choose the right one?

Here’s the short, practical answer.

Selenium – Web Automation Standard

Best for:

- Web browser automation

- Complex cross-browser needs

- Teams comfortable with code-based frameworks

Selenium is one of the most widely used tools for browser automation. It’s flexible and supports multiple languages, but requires framework setup and ongoing maintenance.

It’s powerful — but you own the infrastructure.

Cypress & Playwright – Modern Web Automation

Best for:

- JavaScript-heavy teams

- CI/CD-driven development

- Parallel test execution

These tools offer:

- Fast execution

- Parallel testing (built into Playwright; Cypress parallelizes across machines through Cypress Cloud)

- Easy CI/CD integration

- Strong reporting support

They’re popular in Agile and DevOps environments where automated tests run on every commit.

Appium – Mobile Automation

Best for:

- Native and hybrid mobile apps

Appium enables cross-platform mobile testing using a single API. It’s a common choice when mobile regression testing needs automation due to its repetitive nature.

Codeless Automation Tools

Codeless tools like BugBug allow users to create automated tests without writing code. They reduce entry barriers and are especially useful when teams lack dedicated automation engineers.

Best for:

- Non-technical testers

- Small teams

- Faster setup with lower framework overhead

What All Good Automation Tools Should Provide

Regardless of the tool, modern QA automation platforms typically offer:

- CI/CD integration for continuous testing

- Parallel execution to reduce runtime

- Scheduled or event-triggered test runs

- Detailed reporting (pass/fail, logs, screenshots, trends)

- Framework support for structured test management

Good automation tools speed up testing, improve accuracy, and help catch bugs earlier in the development cycle — which shortens release cycles and improves software quality.

What Does a QA Automation Engineer Actually Do Day-to-Day?

If you search this question online, you’ll find a recurring theme:

“I’m just writing and updating UI tests all day. Is this normal?”

The honest answer: it depends on the maturity of your team and how automation is positioned in your organization.

QA automation is a collaborative effort: QA professionals and software developers share the work of writing test scripts, managing automation frameworks, and ensuring software quality throughout the testing process.

There are effectively two versions of the role.

Think of them as steps on a maturity ladder.

Low-Scope Role (Script Maintenance)

In lower-maturity environments, automation engineers typically:

- Write UI test steps based on tickets

- Update selectors when the UI changes

- Fix broken locators

- Debug CI failures

- Re-run flaky tests

- Add more regression scripts every sprint

The job becomes reactive.

Product changes → tests break → you fix them.

You may not:

- Influence what gets automated

- Own coverage strategy

- Participate in architectural discussions

- Decide tooling direction

This is where automation starts to feel repetitive and mentally draining.

You’re maintaining a safety net someone else designed.

There’s nothing wrong with this phase — many teams start here.

But staying here long-term limits impact.

High-Leverage Role (Quality Engineering)

In more mature teams, automation engineers operate at a different level.

They:

- Improve test architecture and framework design

- Optimize CI pipelines for speed and stability

- Reduce flakiness systematically

- Expand coverage across layers (API, DB, UI)

- Build internal tools (test data generators, mocks, utilities)

- Manage tests and integrate them with version control systems for better tracking, updates, and collaboration

- Participate in design reviews before features are built

- Help define acceptance criteria

- Coach developers on test ownership

Here, automation is not just regression coverage.

It’s quality infrastructure.

The difference isn’t coding skill.

It’s ownership.

When automation engineers influence risk decisions, architecture, and process — the role becomes strategic instead of repetitive.

The Maturity Ladder Perspective

Most teams move through phases:

- Start with manual testing.

- Add UI automation.

- Struggle with maintenance.

- Either stagnate… or evolve into engineering-driven automation.

As teams progress up the maturity ladder, QA automation proves its value by reducing manual effort, increasing efficiency, and enabling more robust regression and cross-platform testing.

If you feel stuck writing UI scripts, it may not be a career issue.

It may simply be that your team hasn’t moved up the ladder yet.

Common Mistakes That Make QA Automation Feel “Boring”

Automation doesn’t become monotonous by accident.

It usually happens because of structural mistakes. One of the most common is not selecting the right test automation frameworks, such as data-driven, keyword-driven, or hybrid frameworks, which are essential for building a robust and maintainable QA automation process.
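For readers unfamiliar with the term, a data-driven test keeps one test procedure and feeds it a table of inputs and expected outputs, so adding a case means adding a row rather than another script. Below is a minimal sketch with `unittest`'s `subTest`; the `is_valid_email` rule is a deliberately simplified, hypothetical validator, not a production-grade one.

```python
import re
import unittest

# Hypothetical, simplified validation rule (not RFC 5322 compliant).
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def is_valid_email(value: str) -> bool:
    return EMAIL_PATTERN.fullmatch(value) is not None


# The "data" in data-driven: one row per case.
EMAIL_CASES = [
    ("user@example.com", True),
    ("first.last@sub.example.org", True),
    ("no-at-sign.example.com", False),
    ("two@@example.com", False),
    ("spaces in@example.com", False),
    ("", False),
]


class EmailValidationTest(unittest.TestCase):
    def test_email_cases(self):
        for value, expected in EMAIL_CASES:
            # subTest reports each failing row separately instead of stopping at the first.
            with self.subTest(value=value):
                self.assertEqual(is_valid_email(value), expected)
```

Rules like this one are also a good example of logic that can be checked at the unit level instead of through a UI form.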

Automating Everything at the UI Level

When every validation is pushed into end-to-end UI tests:

- Suites become slow.

- Locators break constantly.

- Debugging becomes painful.

- Maintenance dominates time.

The exception is a small UI smoke suite. Automating smoke tests at the UI level is a fast way to confirm that the main functions still work after an update or at the start of each test cycle; the mistake is letting that suite grow into full regression coverage.

UI-heavy automation leads directly to script churn.

Measuring Success by Number of Test Cases

More tests ≠ better coverage.

When teams celebrate:

- “We have 1,000 automated tests!”

Without asking:

- What risks do they cover?

- What escaped bugs still occur?

Automation becomes volume-driven instead of value-driven.

No Ownership of Test Strategy

If automation engineers only implement what others define:

- They lack context.

- They lack influence.

- They lack motivation.

Strategy ownership transforms the role from implementer to engineer. Effective QA automation also relies on thorough test planning, where the approach, scope, resources, and schedule are clearly defined to guide the automation process and ensure comprehensive coverage.

Treating Automation as QC Instead of QA

Quality Control = verifying after the fact.

Quality Assurance = shaping how quality is built.

If automation only checks finished features and never influences design or risk modeling, it stays reactive.

And reactive work feels repetitive.

Ignoring Performance of the Test Suite

A 45-minute pipeline kills momentum.

Slow suites create:

- Frustration

- Merge bottlenecks

- Emotional resistance from developers

Optimizing test execution—by streamlining how tests are run, scheduled, and reported—can significantly improve suite performance and reduce overall pipeline time.

Performance optimization is engineering work — and often more impactful than adding new tests.

Not Empowering Developers to Fix Tests

If QA is the only team allowed to touch tests:

- Bottlenecks form.

- Ownership becomes siloed.

- Automation becomes “someone else’s problem.”

High-performing teams distribute test responsibility. Keeping tests in a version control system such as Git (hosted on a platform like GitHub) lets QA and developers both contribute to test maintenance and review each other’s changes.

Automation supports developers — it doesn’t isolate them.

QA Automation Best Practices for Modern SaaS Teams

Startups and SaaS teams operate under constraints:

- Limited QA headcount

- Frequent releases

- Tight budgets

- Rapid iteration cycles

Automation must be practical, not theoretical.

Start Small, Protect Critical Paths

Don’t automate everything.

Identify:

- Revenue-generating flows

- Authentication and access control

- Checkout or subscription logic

- Core user journeys

Protect what matters first.

Everything else can follow.

Keep UI Tests Minimal & High-Value

Use UI automation for:

- End-to-end validation

- Cross-browser rendering

- Critical flows

Functional testing is a key type of automated QA testing that verifies whether individual features of your application work as intended. Along with functional testing, other common types of automated QA testing include performance testing, unit testing, and smoke tests.

Push logic-heavy validation to API or lower layers whenever possible.

Minimal UI = lower maintenance.

Parallelize Early

Execution time compounds as your suite grows.

Design for:

- Parallel execution

- Segmented test groups

- Critical vs extended suites

Many QA automation tools support parallel testing, allowing you to run multiple test cases simultaneously and significantly reduce overall testing time.

Short feedback loops protect developer productivity.
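Segmenting a suite across parallel runners is essentially a bin-packing problem. A common heuristic is greedy longest-processing-time (LPT) scheduling: sort tests by historical duration and always hand the next-longest test to the least-loaded shard. The durations below are hypothetical, and some runners and CI services offer their own timing-based splitting, so treat this as an illustration of the idea.

```python
import heapq


def shard_by_duration(durations_s: dict[str, float], shards: int) -> list[list[str]]:
    """Greedy LPT: give each test, longest first, to the currently lightest shard."""
    if shards < 1:
        raise ValueError("need at least one shard")
    # Min-heap of (total_seconds, shard_index): the lightest shard pops first,
    # ties go to the lower index.
    heap = [(0.0, i) for i in range(shards)]
    plan: list[list[str]] = [[] for _ in range(shards)]
    # Sort by duration descending, then by name so the plan is deterministic.
    for test, seconds in sorted(durations_s.items(), key=lambda kv: (-kv[1], kv[0])):
        load, idx = heapq.heappop(heap)
        plan[idx].append(test)
        heapq.heappush(heap, (load + seconds, idx))
    return plan


def wall_clock(durations_s: dict[str, float], plan: list[list[str]]) -> float:
    """With shards running in parallel, the slowest shard sets pipeline time."""
    return max(sum(durations_s[t] for t in shard) for shard in plan)


# Hypothetical historical durations in seconds (675 s if run one after another).
durations = {
    "checkout_e2e": 240, "signup_e2e": 180, "profile_e2e": 120,
    "unit_pack": 60, "billing_api": 45, "search_api": 30,
}
plan = shard_by_duration(durations, shards=3)
print(plan)
# [['checkout_e2e'], ['signup_e2e', 'billing_api'], ['profile_e2e', 'unit_pack', 'search_api']]
print(wall_clock(durations, plan))  # 240
```

Three shards cut this hypothetical pipeline from 675 s to 240 s, and no split can do better, because the single longest test sets the floor. That is one more reason to keep slow end-to-end tests few and short.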

Treat Flakiness as a Production Bug

If a test fails intermittently:

- Investigate immediately.

- Fix root cause.

- Review and maintain test scripts regularly to ensure reliability.

- Avoid normalizing reruns.

Flaky automation erodes trust faster than missing automation.

Review Tests Like Production Code

Test code deserves:

- Code reviews

- Refactoring

- Clean architecture

- Clear naming

- Version control discipline (for example, Git with reviewed pull requests) for collaboration and change tracking

Poorly written tests become technical debt.

Track Escaped Defects

Ask:

- What production bugs were not caught by automation?

- Why?

- Could they have been prevented?

Analyzing test results is essential for understanding which issues slipped through automation. Tracking failure logs and screenshots during test execution helps debug problems and provides valuable context for interpreting test results.

Escaped defect tracking ties automation back to business impact.

Continuously Refactor Test Architecture

As products evolve, so should automation.

Refactor:

- Duplicate logic

- Overcomplicated abstractions

- Redundant UI flows

- Outdated frameworks

It's crucial to continuously update your automation testing strategies to align with product changes, ensuring your tests remain effective and relevant as your application grows.

Automation must evolve alongside the system it protects.

A Simple Maturity Model for QA Automation

Use this to self-diagnose where your team stands.

A maturity model gives teams a structured way to assess and improve their QA automation practices. Knowing your current level helps you identify gaps and opportunities to improve efficiency, accuracy, and how well automation is integrated into your development workflow.

Level 1 – Manual + Basic Regression

- Mostly manual testing

- Some scripted smoke testing

- Little CI integration

- Reactive bug detection

Level 2 – UI Automation in CI

This is where many teams plateau after moving from manual QA to test automation. UI tests run in pipelines and provide basic regression protection, but as coverage grows, maintenance effort increases and flakiness begins to appear.

Level 3 – Pyramid-Based Automation

- API + UI layering

- Integration tests verify how software modules communicate with each other

- Reduced UI dependence

- Faster pipelines

- More stable feedback

Automation becomes more sustainable.

Level 4 – Risk-Driven Automation Strategy

- Tests mapped to business risk

- Structured test planning guides what gets automated and why

- Coverage decisions are intentional

- Escaped defects are tracked

- Flakiness is aggressively managed

Automation aligns with product goals.

Level 5 – Quality Engineering Culture

- Developers own testing responsibilities

- Automation engineers influence design

- Shift-left practices are standard

- CI is trusted

- Test infrastructure is treated as core product infrastructure

- Automation practices are fully aligned with the software development lifecycle

At this level, automation no longer feels like maintenance.

It feels like engineering.

Final Reflection

If QA automation feels boring, it’s usually not because automation itself is boring.

It’s because:

- Scope is too narrow.

- Ownership is limited.

- Architecture is weak.

- Strategy is unclear.

Automation becomes engaging when it protects meaningful risk and operates as infrastructure — not as a script factory.

The goal isn’t to write more tests.

The goal is to build a sustainable system that lets your team ship with confidence. Test automation plays a crucial role in achieving this by improving efficiency, coverage, and reliability throughout your development process.

Happy (automated) testing!